Something has shifted in the way professionals talk about their careers. Not loudly — the shift is more often quiet, internal, and late at night. A creeping sense that the ground is moving. That the skills built over years may be worth less tomorrow. That staying still feels riskier than it ever did, but moving is hard when you are not sure which direction to move in.

AI anxiety at work is real, it is widespread, and it is poorly served by most of the content written about it. What professionals are mostly offered is either alarm — headlines competing to predict the most dramatic disruption — or hustle — listicles of tools to learn immediately, as if urgency and productivity were the appropriate response to genuine uncertainty.

This guide takes a different approach. It is not a guide to getting excited about AI. Nor is it a guide to surviving it. It is a guide to navigating AI disruption with the quality that research consistently shows matters most in periods of genuine uncertainty: calm, grounded clarity.


A YouGov survey of 1,000 UK employees, commissioned by the Access Group in February 2026, found that 46% of UK workers fear AI's impact on their jobs. Among 25–34-year-olds, that figure rises to over 60%. Employees are twice as likely as HR leaders to name job loss as their primary AI concern, a striking gap that suggests most organisations are significantly underestimating what their people are experiencing.

1 Access Group / YouGov (February 2026). AI Workplace Report: UK Employee and HR Decision-Maker Survey. N=1,000 UK employees, 503 HR decision-makers. theaccessgroup.com


This anxiety does not exist in a vacuum. Official UK government data confirms the structural changes that are generating it. A January 2026 assessment by HM Government found that UK job postings in high-AI-exposure roles fell by 38% between 2022 and 2025, with the effect concentrated in higher-salary, cognitive occupations — the roles that many professionals believed were insulated from automation.

The same government report noted that entry-level and graduate roles are among the most acutely affected, as AI absorbs the task-based work that has traditionally served as the on-ramp into professional careers.

So the anxiety is not irrational. It is a calibrated response to real signals. The question is not whether the signals are genuine — they are — but how to respond to them without the response becoming part of the problem.

2 HM Government / DSIT (January 2026). Assessment of AI Capabilities and the Impact on the UK Labour Market. gov.uk

"The anxiety is not the problem. What you do with it is the only thing you can control."


AI anxiety at work is not a single, uniform experience. Research published in Frontiers in Psychology identifies at least nine distinct dimensions, ranging from general AI anxiety and technoparanoia to job-replacement anxiety and what researchers call "sociotechnical blindness" — the sense of not understanding the systems that are increasingly shaping your working life.

The study found that AI anxiety is driven more by psychological dispositions and the experience of uncertainty than by demographic factors. In other words, the professionals most affected are not defined by age, gender, or sector — they are defined by their relationship to uncertainty itself.

A newer term has emerged in 2026 that captures something important: FOBO — the Fear of Becoming Obsolete. Unlike the cruder fear of immediate redundancy, FOBO is subtler and more pervasive. It is the sense that skills are degrading in real time. That the gap between where you are and where you need to be is widening faster than you can close it. That the window for staying relevant is closing.

FOBO is particularly acute for mid-career professionals who have built expertise over years — and who now watch entry-level tasks, once the foundation of professional development, being automated away. The anxiety is not just about jobs. It is about identity, competence, and the question of what you are worth when the task-based components of your work can be replicated cheaply and instantly.

For senior professionals specifically, a related and practical question sits alongside this: not just what AI might take, but what you actually need to learn in response. That question has a more specific — and more manageable — answer than the general noise suggests.

The AI Skills Panic: What Senior Professionals Actually Need to Learn works through it.

If that identity question feels close to home, this article goes deeper:

Professional Identity in the Age of AI: The Question Nobody Is Asking

"FOBO is not the fear of losing your job. It is the fear of becoming irrelevant, and it is quieter and harder to name."

3 Saurombe, M.D. (February 2026). Algorithmic anxiety: AI, work, and the evolving psychological contract in digital discourse. Frontiers in Psychology, 17. doi:10.3389/fpsyg.2026.1745164


The honest answer is that the research says several things simultaneously — some reassuring, some genuinely concerning, and most nuanced in ways that headlines do not convey.

Task automation, not job elimination, is the dominant near-term pattern. AI is more likely to automate specific tasks within a role than to eliminate the role entirely. This is meaningful — it means roles change, sometimes significantly — but it is different from the wholesale replacement that dominates public discourse.

The roles most exposed are those with high proportions of repetitive, pattern-based, on-screen tasks. Roles that require judgement, relationship management, contextual interpretation, physical presence, or ethical accountability are less exposed — not immune, but less exposed.

New roles are being created alongside those being displaced. The World Economic Forum projects approximately 92 million roles displaced globally by 2030, alongside 170 million new ones, a projected net gain of roughly 78 million. The disruption is real; it is not unidirectional.

The speed of transition matters enormously and is genuinely unknown. A slow transition creates time for adaptation; a fast one does not. Current indicators suggest the pace is accelerating, but the endpoint and the timeline remain contested among economists and technologists with access to the same data.

The distribution of disruption is highly uneven. Entry-level roles, certain professional services, and knowledge work with high task-repetition are more exposed than others. Geography, sector, and specific role composition matter more than broad occupational labels.

For a closer look at what the research means specifically for experienced professionals:

Will AI Make My Experience Irrelevant? What the Research Actually Says

"The question is not whether AI will change your work. It will. The question is whether your response to that change is driven by panic or by clarity."


The response to AI anxiety that most content recommends is, implicitly or explicitly, urgency. Learn these tools. Upskill immediately. Stay ahead of the curve. The underlying message is that anxiety is the correct fuel for action: that you should be afraid, and that fear should drive you to consume more content, take more courses, and be more productive.

This framing has two problems. The first is practical: panic-driven upskilling produces shallow, fragmented learning that rarely translates to meaningful capability. Research on skill acquisition consistently shows that deliberate, contextualised practice in areas of genuine relevance outperforms anxious consumption of whatever is currently trending.

The practical question that follows, namely what is actually worth learning at a senior career stage and what is merely generating noise, is addressed directly in The AI Skills Panic: What Senior Professionals Actually Need to Learn.

The second problem is deeper: it treats the anxiety as something to be outrun rather than addressed. And anxiety that is outrun rather than processed tends to return, often at 3am, often louder than before.

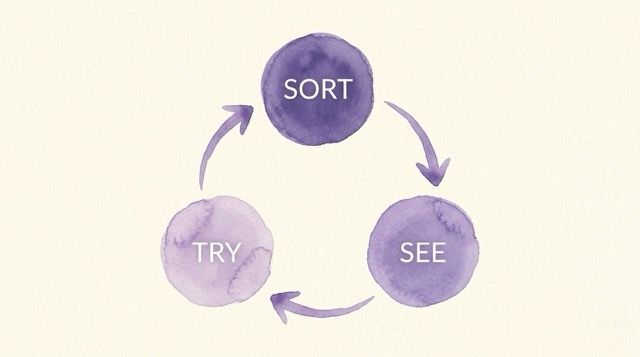

The calm alternative is not complacency. It is a different quality of attention. Instead of asking "what should I be learning right now?" — a question that tends to produce overwhelm — it asks three more grounded questions:

- What is actually within my control in this situation?

- What do I already have that is genuinely valuable?

- What is one concrete, manageable step I can take from here?

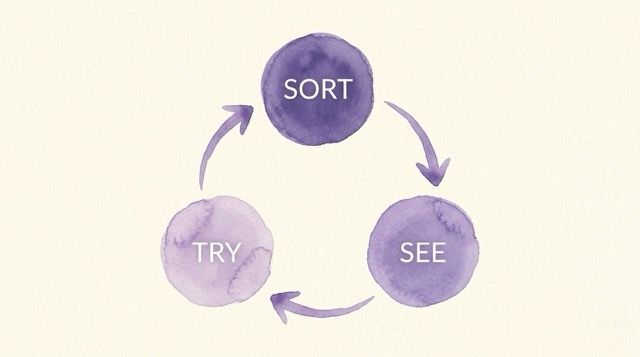

These questions map to what Embracing Imperfection Academy calls the Calm Action Cycle — a three-stage approach to navigating disruption without being driven by panic or paralysed by uncertainty.

| STAGE | THE QUESTION | WHAT THIS MEANS IN PRACTICE |
| --- | --- | --- |
| SORT | What is actually within my control here? | Separate genuine, actionable concerns from background noise. Distinguish what you can act on from what you cannot, and stop spending energy on the latter. |
| SEE | What do I already have that is genuinely valuable? | Audit your real assets: skills, judgement, relationships, track record. Identify where you have more options than the anxiety suggests. See the fuller picture before deciding anything. |
| TRY | What is one concrete, manageable step from here? | Not a plan. One experiment. Small enough to start this week. Real enough to learn something from. Repeat. |

The Calm Action Cycle is the throughline of everything that follows in this guide, and in the AI Anxiety Reset programme for professionals who want to go deeper.


One of the most useful reframes available to professionals navigating AI disruption is moving from "what might AI replace?" to "what do I bring that AI structurally cannot?"

This is not wishful thinking. It is a genuine observation about the current and foreseeable limits of AI systems. The skills that remain distinctly human are not exotic or rare — they are the skills that most experienced professionals have been quietly developing for years.

AI systems are trained on patterns. They are significantly less capable of navigating genuinely novel situations, ethical complexity, or contexts where the right answer depends on human factors that are not captured in data. The professional who can read a room, navigate an ambiguous client relationship, or make a sound judgement under genuine uncertainty is not competing with AI — they are operating in a different register.

Trust, rapport, empathy, and the capacity to hold difficult conversations are not automatable in any meaningful sense. The professions that will be most resilient are those where the human relationship is the product — coaching, leadership, complex advisory work, healthcare, teaching. Even in roles that are not primarily relational, the ability to work effectively with other people remains a durable differentiator.

AI can generate text. It cannot tell your story. The capacity to communicate with genuine authority — to speak from lived experience, to make meaning of complexity, to persuade by credibility rather than pattern-matching — remains distinctly human. This is particularly relevant for professionals whose expertise is embedded in their career history rather than their most recent credential.

The most resilient professionals are not those who have learned the most, but those who have demonstrated they can learn well in new domains. A track record of successful adaptation is, in a disrupted market, more valuable than any specific current skill. The question worth asking is not "what do I know?" but "how quickly and well can I learn?"

"AI can replicate tasks. It cannot replicate you — your history, your judgement, your capacity to earn trust."


Rather than a comprehensive upskilling plan — which tends to produce overwhelm rather than action — what follows is a more limited and more honest set of starting points.

Not all roles are equally affected. Before responding to AI disruption in general, it is worth understanding your specific situation. Which tasks in your current role are most repetitive and pattern-based? Which require contextual judgement or relationship management? This is not about reassuring yourself — it is about getting an accurate picture to work from, rather than responding to generalised alarm.

List what you have built over your career that is not task-specific: networks, domain knowledge, a track record of delivering under pressure, the ability to communicate credibly in your field. These assets do not disappear when tasks are automated. They often become more valuable, because they are the things AI cannot provide.

The most common mistake in response to AI anxiety is over-diversification — trying to learn too many things simultaneously in response to generalised fear. One area of genuine relevance to your work, pursued with real depth over three to six months, produces more durable capability than a year of fragmented consumption.

If AI anxiety is affecting your sleep, your concentration, or your sense of professional identity, the most productive thing you can do is address it as anxiety — not simply as an information deficit that more learning will resolve. The anxiety and the information gap are related but distinct. Both are worth attending to.

Read more: